Everyone is selling GEO and AEO (generative engine optimization and answer engine optimization) right now as if someone had found a new button.

A button that gets you into ChatGPT.

A button that increases your chances of being cited.

A button that gives marketers and SEO teams that old familiar feeling back: take an action, wait, watch a metric go up.

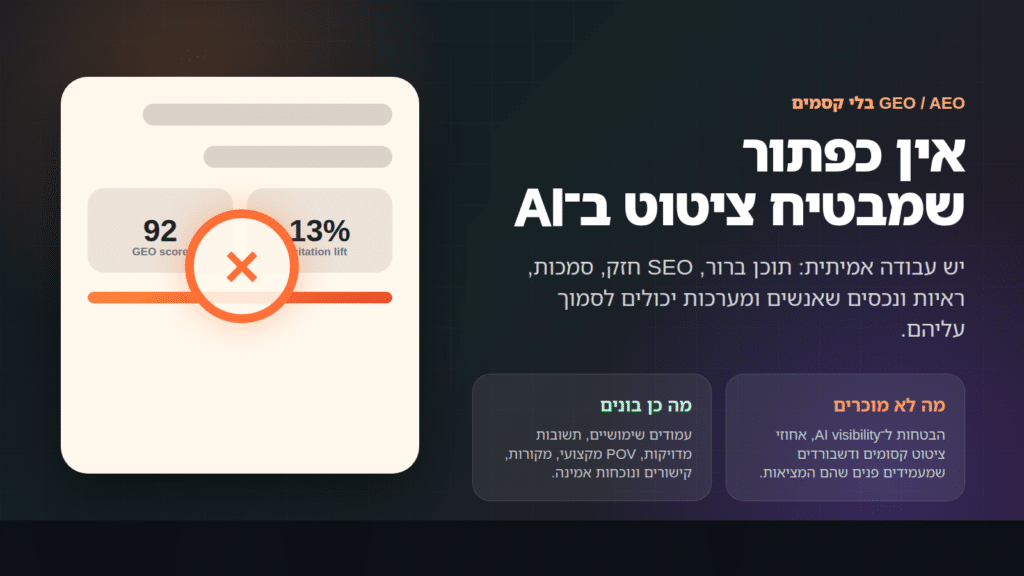

But there is no button like that.

And that is exactly where the confusion begins.

The problem is not that content is too messy for AI engines to understand. Quite the opposite: messy language is a big part of why language models exist. They were built to read imperfect language: articles, forums, documents, questions, answers, partial phrasing, contradictions, repetition, and texts written at every possible level of order.

The real problem is an industry trying to sell certainty where certainty does not fully exist.

Another dashboard.

Another checklist.

Another promise about AI visibility.

Another graph that looks like it explains why one model cited one source instead of another.

That feels reassuring. It looks professional. It also does not always tell the full story.

Language models do not need us to hold their hand

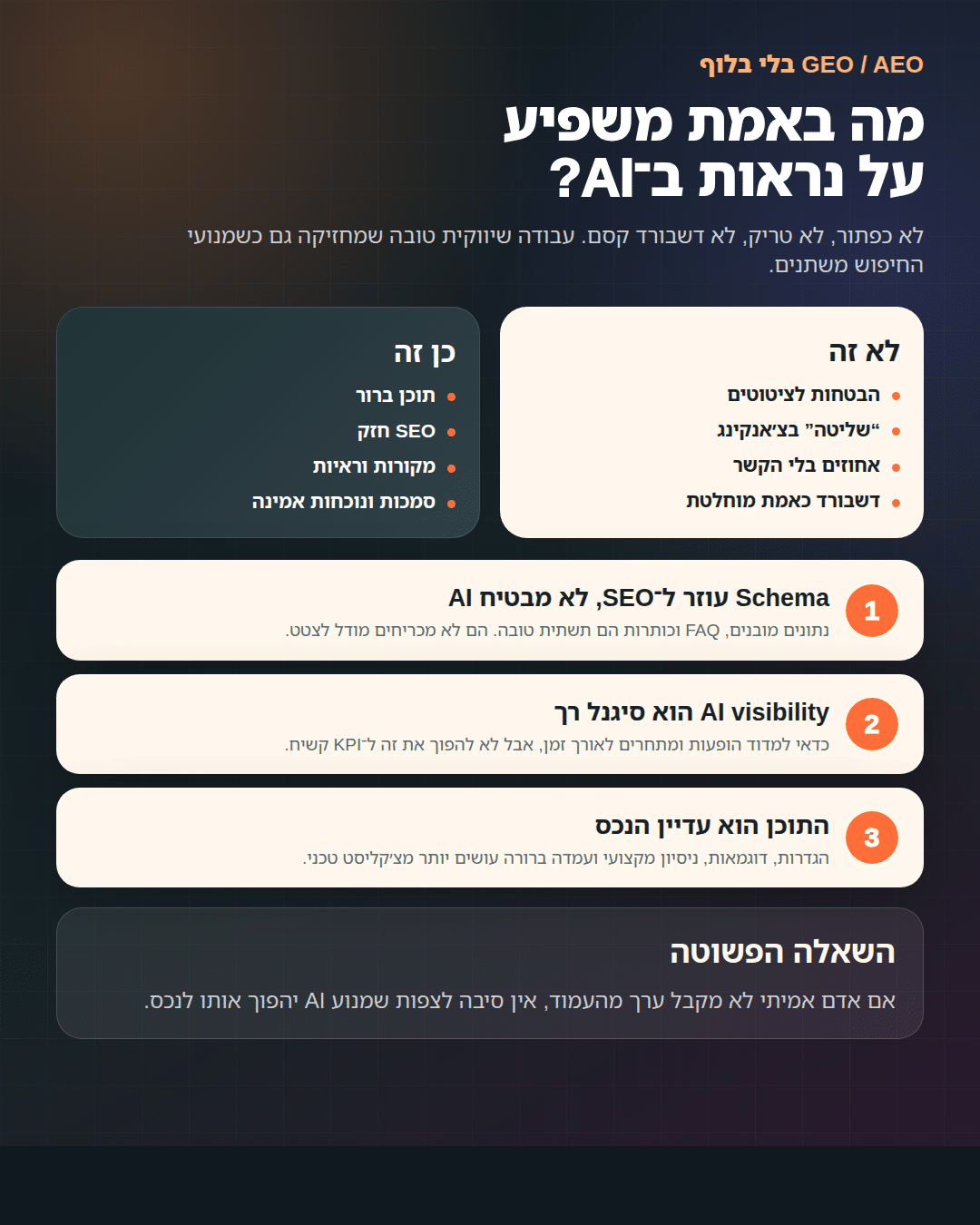

One common argument around GEO is that content has to be specially "packaged" for AI: split into chunks, with question-and-answer blocks, organized headings, schema markup, and short, clear definitions.

Some of that is true.

But let's be honest: most of those things are not new. They are good SEO. They are good UX. They are good writing. They help people understand a page, so they can also help systems that are trying to understand text.

That is not the same as saying we found a magic mechanism for getting cited in ChatGPT.

Language models are not waiting for schema to understand a sentence. They do not need an FAQ to recognize an answer. They do not read only neat tables and ignore everything else. They read text, recognize context, and generate an answer from what is retrieved for them and what was already learned during training.

So yes, structure helps.

But not because the model is helpless without it.

Structure helps because clear content is a better asset.

The confusion starts when good practices are sold like new magic

We do not have a problem with GEO.

We have a problem with the way it is being sold.

If the recommendation is to write a clear answer near the top of the page, add sources, organize your heading hierarchy, build better service pages, and strengthen authority around relevant topics and entities, we are fully in.

That is solid work.

If the promise is a "method that guarantees AI visibility," "control over chunking," "predicted citation rates," or a dashboard that shows exactly why ChatGPT picked one brand over another, that is where you need to stop.

Because none of us has full access to what happens inside every model, every prompt, every language, every retrieval layer, every version, and every single day.

Even when the content is strong, the model may still not cite it.

Even when the page is organized, another answer may win.

Even when a brand shows up today, it may disappear tomorrow.

That does not mean you do nothing.

It means you do not sell control when what actually exists is probability.

So what does work?

What worked before the buzz got a new name.

Clear content.

Pages that explain something all the way through.

Definitions you can understand without guessing what the writer meant.

Real examples.

Professional experience that does not sound like generic output from a content machine.

Evidence, sources, names, processes, and context.

An information architecture that makes it clear which page is the main asset, which page supports it, and what should not compete for the same topic.

And consistency too: across the site, the brand, the profiles, the mentions, the links, and the places where the market actually lives.

None of that is a "GEO trick."

It is just good work.

And it is less flashy. It is much easier to sell a tool that shows a score than to sit down with a service page and ask: does the person who actually needs this service understand within ten seconds why they are here?

But that is where the value is.

What Strudel does not sell

We do not sell a promise of control over AI visibility.

No serious operator can promise that.

We do not sell "13% higher citation odds" without asking where that number came from, in which model, in which market, against which competitors, and under what methodology.

We do not sell the feeling that the dashboard is reality.

A dashboard is a tool. Sometimes an important tool. But it is not the truth itself.

AI visibility is a soft signal. It is worth measuring, but carefully, over time, and with a lot of humility. It does not replace classic SEO, keyword research, strong content, links, reputation, or a real understanding of the client.

What we do instead

We do strong SEO.

Not nostalgic SEO that pretends AI does not exist, but SEO that understands the game got bigger.

We build clear service pages.

We create content with a point of view, not another summary of what is already written in the top ten results.

We strengthen consistency around the brand, the services, the people, and the core concepts.

We organize the site so not every article competes with every service page.

We write answers a real person would be glad to receive, not paragraphs written only to feed a crawler.

And we show up in places with real professional context: relevant sites, communities, mentions, collaborations, high-quality guest content, and sources the market already trusts.

And yes, we measure.

But we measure carefully.

We check where the brand appears in AI answers, which competitors keep showing up, which pages get cited, which phrasing works better, and what changes over time. Not to pretend we have a remote control for the model, but to see which way things are moving.
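To make "measure carefully" a little more concrete, here is a minimal sketch of one way to track appearance over time. It assumes a hypothetical log in which a fixed set of prompts is rerun each week and each run records whether the brand appeared; the week labels, prompt names, and results below are invented for illustration and are not real data. Instead of a single precise-looking score, it reports a weekly appearance rate with a 95% Wilson score interval, so a small sample shows up as a wide range.

```python
from collections import defaultdict
from math import sqrt

# Hypothetical, invented observations: (week, prompt, brand_appeared).
# In practice these might come from rerunning a fixed prompt set against
# an AI engine and recording whether the brand was mentioned.
observations = [
    ("week-1", "prompt-a", True), ("week-1", "prompt-b", False),
    ("week-1", "prompt-c", False), ("week-1", "prompt-d", True),
    ("week-2", "prompt-a", True), ("week-2", "prompt-b", True),
    ("week-2", "prompt-c", False), ("week-2", "prompt-d", True),
    ("week-3", "prompt-a", False), ("week-3", "prompt-b", True),
    ("week-3", "prompt-c", False), ("week-3", "prompt-d", False),
]


def wilson_interval(hits: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion (z=1.96 gives ~95%).

    The interval is wide when n is small, which is the honest answer
    when only a handful of prompts were checked.
    """
    if n == 0:
        return (0.0, 1.0)  # no data: anything is possible
    p = hits / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return (max(0.0, center - half), min(1.0, center + half))


def weekly_appearance(obs):
    """Group observations by week and return hits, total, rate, and interval."""
    counts = defaultdict(lambda: [0, 0])  # week -> [hits, total]
    for week, _prompt, appeared in obs:
        counts[week][1] += 1
        if appeared:
            counts[week][0] += 1
    return {
        week: (h, n, h / n, wilson_interval(h, n))
        for week, (h, n) in sorted(counts.items())
    }


for week, (hits, total, rate, (lo, hi)) in weekly_appearance(observations).items():
    print(f"{week}: {hits}/{total} = {rate:.0%} (95% CI {lo:.0%}-{hi:.0%})")
```

With only four prompts per week, the intervals overlap heavily, and that is the point: a move from 1 in 4 to 3 in 4 is a reason to keep watching, not a result to sell.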

In the end, the question has not changed

Is your content worth reading?

Is it clearer than the competition?

Does it contain real experience?

Does it help someone make a decision?

Is it built so both people and systems can understand what matters inside it?

If the answer is yes, you are in a better position.

Not because GEO gave you control back.

But because you stopped chasing the illusion of control and went back to building assets people and systems can actually trust.

When the buzz cools down, that will probably be the only thing still left on the table.